
Om Malik is a San Francisco-based writer, photographer and investor.

Adobe has to embrace AI and become an even better fast follower in order to survive & thrive in the future.

Every year at Adobe MAX, its annual launch fest, Adobe announces enhancements to its flagship software products. I keep an eye on the photography-related offerings: Adobe Photoshop and Adobe Lightroom. This year’s product enhancements tapped into Adobe’s “artificial intelligence” technology, Adobe Sensei.

The new Sensei-powered features will allow Adobe’s software to do Photo Restoration (eliminating scratches and other minor imperfections on old photographs) and Select People, which detects a person within a photograph and then creates masks specific to their facial skin, body skin, eyebrow, iris, pupil, lips, teeth, mouth, and hair. There is a massive improvement in the ability to make detailed selections of complex objects, say, the hair of an Icelandic horse. The list of features is long and impressive, but the underlying theme is clear: AI is helping automate complex and repetitive tasks. (Read my column in The Spectator, Why we should learn to stop worrying and learn to love AI.)

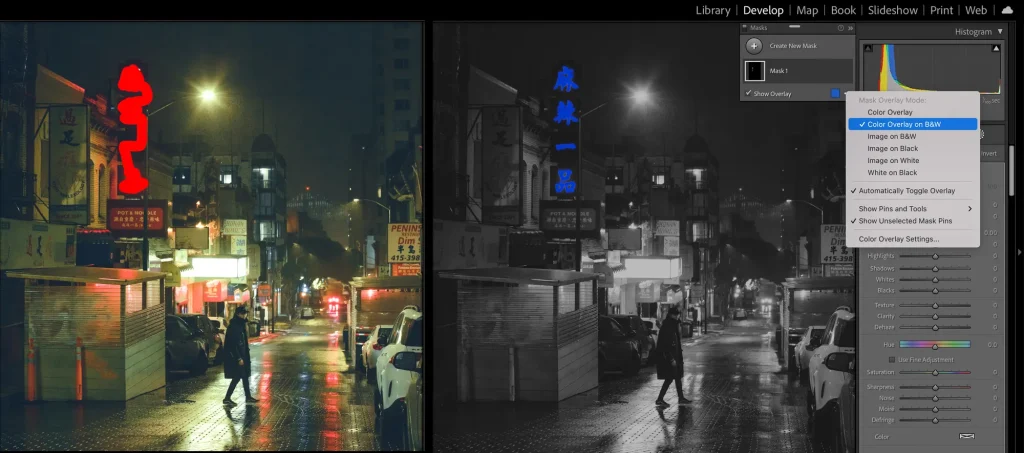

I have been using the new software for about ten days. My workflow hasn’t changed: I capture with the camera the same way I always have, and my use of color (or lack thereof) isn’t very different. What’s different is the ability to select and mask complex objects (a bird or a tree, for example) and then separate them from the background or push them into the distance. As someone who relies heavily on micro-contrast and luminosity-based depth creation, I welcome these enhancements.

Until now, Adobe has taken only hesitant steps in using machine learning and artificial intelligence in its creative suite, and, to be generous, many of those attempts were mediocre. Over the past 24 months, however, you could see significant changes coming to the software. Sky replacement tools, Sensei search, content-aware fill, object selection, an array of neural filters, and enhanced details were some of the earlier efforts, and they improved over time. The latest update indicates that Adobe is likely pushing more “AI” into the core Creative Cloud offerings.

As a Photoshop user, I can attest that these complex selections typically take a long time and painstaking effort. Selections that used to take the better part of an hour now happen in seconds, and the editing is easier and faster. Having used these AI improvements, I can see the difference.

As I have said — AI is for augmenting our capabilities.

Impressive as they are, many of the features Adobe announced were pioneered and previously launched by upstarts, including Skylum and Topaz Labs. Skylum, a Kyiv-based company, embraced machine learning and artificial intelligence early and has been pushing the envelope for almost five years now. Even with all its might, Adobe couldn’t ignore what the new kids on the block were doing.

These upstarts have challenged the lumbering giant. Skylum launched its AI-enhanced Luminar Neo software, and Adobe is nowhere close to even copying its features; it doesn’t have to, as I explain later. I am a fan of Skylum’s Luminar software for a simple reason: the company pushes the envelope and, more importantly, shakes Adobe out of its slumber.

Startups (and upstarts) such as Skylum, with their nimble processes and willingness to embrace new technology, have disrupted the status quo. If not for the challenge from Snap and Instagram, Facebook wouldn’t have moved aggressively into the visual and video realms. With demonic ruthlessness, Apple continues to Sherlock many “apps” that smaller, independent software developers have dreamed up. Without such challengers, we would all be stuck with the mediocrity that results from “features by committee.”

As I have previously outlined in The Grand Asparagus Theory, you have to be on trend, or else you will be left behind.

If it is asparagus season, don’t be surprised to see restaurants across the spectrum offering asparagus dishes on their seasonal menus. When truffles are in season, well, you get truffles on everything, including the ice cream. If a restaurant doesn’t follow along, customers go to the other place, and it loses a chance to make money. The same holds true in the world of technology: big companies have to serve whatever is in season. Any new behavior that becomes popular, drives engagement, and steals attention from a big platform is viewed as a threat.

The harsh reality of the technology industry in 2022 is that no one seems to care who invents a feature; what matters is who puts it in front of the largest number of people, and how fast.

Good incumbents, if they want to retain their preeminence, have to be great fast followers. If not, they tend to be left behind. Adobe mostly ignored two upstarts, Figma and Canva, only to realize it was losing a generation of customers. Ultimately, the company is spending $20 billion to buy Figma. (Related: What the Figma acquisition means for Adobe’s Future/The Spectator.)

As it charts its course with Figma and the cloud, Adobe faces a bigger dilemma: not losing the audience for its core money-making franchises such as Photoshop and Illustrator, which bring in subscription dollars. “Subscriptions” and “Cloud” are the magic words that keep stock prices flying high. High stock prices keep investors from meddling too much in a company’s affairs. If stock prices decline, investors start asking tough questions.

Figma is a good wake-up call for the company. It can’t afford to fall into a slumber again, especially over the products that goose its revenues the most: creative tools. It can’t have people start looking at Affinity, Skylum, and countless others as viable options. Adobe has no choice but to become a better “Fast Follower.”

***

This is not easy for a company its size, but it is possible, and it is possible to do it well, as Adobe has shown with its effective use of Sensei AI technology. Sensei works because Adobe can access a large corpus of images and other visual data to inform its algorithms. It can calibrate its systems based on that data and, in turn, offer multiple applications of Sensei. This virtuous circle of data informing algorithms is where the large companies win over the upstarts.

The upstarts lack that scale and almost always depend on outside resources. And even though they come up with unique features, sadly, their universe is smaller. The greatest strength of incumbents is their ability to aggregate things and offer them at scale to more people.

Silicon Valley (and the tech world at large) is currently obsessed with generative AI and how it will reshape the visual data landscape. It is one of the biggest shifts in how we consume, create, and recreate information. Some have predicted it will end Adobe and its hegemony. I am not so sure. Whether anyone can turn DALL-E, Midjourney, and Stable Diffusion into products that normals put to everyday use and, more importantly, will pay for, and then deliver them at scale, remains to be seen.

OpenAI, Stability AI, and others need a lot of very expensive cloud resources. Adobe, which just had a record quarter, has enough money to pay for and scale those resources. It also has access to the end market to deliver “generative AI” in acceptable dribs and drabs to customers who aren’t on the cutting edge.

The biggest thing we forget in Silicon Valley is that the rest of the world takes much longer to adopt cutting-edge technologies, and if you have enough money, as an incumbent, you can slouch your way to the future.

Adobe has used its scale, size, and market reach to put AI in the hands of more people than all of its competitors combined. After ten days of using its new products, I can safely say — I am okay with Adobe’s lateness, even if it sets me back by a whopping two hundred and fifty bucks a year.

November 1, 2022. San Francisco

Additional Reading: