
Om Malik is a San Francisco-based writer, photographer and investor.

Pop some popcorn. Put on some butter. Add some salt. Because opportunists (politicians) are pointing their muskets at villains (tech bros), using children’s welfare as the ammunition. In case you were wondering, I am talking about the battle between the New Mexico AG and Silicon Valley’s villain in chief.

The next bout is on May 4. So mark your calendars. Why?

This week two verdicts came in quick succession. First, a New Mexico jury ordered Meta to pay $375 million for knowingly enabling child predators on Instagram and Facebook. Then, a Los Angeles jury found Meta and YouTube negligent for designing platforms that addicted a young woman who first used YouTube at age six and Instagram at nine.

On the surface, this is a big win for ambulance chasers. However, it could be much bigger if politicians actually had intentions that went beyond winning the next election. History tells me they are what I said they are: opportunists.

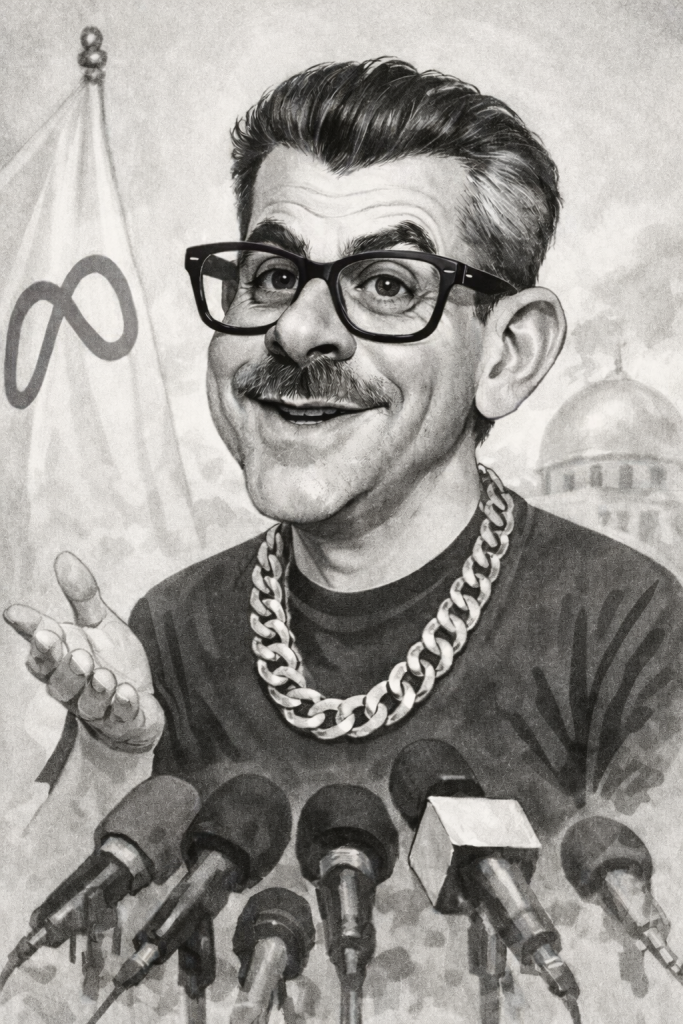

Let’s be honest about what the New Mexico AG is. He is an elected official who identified the most despised man in American technology, a category that has never been more unpopular, and filed suit. Zuckerberg is the perfect patsy. Ian Fleming couldn’t have created a more perfect caricature of a villain for our post-social age. Weird. Awkwardly dressed to appear cool. Black AR glasses.

Rich, arrogant, visibly indifferent to the damage his products caused, and now on record in two courtrooms defending decisions his own safety teams told him were harming children. The internal documents that came out in both trials showed the same pattern: engineers and safety researchers raising alarms for years, recommendations made and ignored, Zuckerberg choosing growth. None of this is new. I wrote about Facebook’s growth-at-any-cost DNA back in 2018. The pattern has not changed. Only the courtroom has.

The New Mexico AG smelled blood. A politician at the end of the day, doing what politicians do. Scoring points against the current villain in chief, building a reputation on the wreckage of someone else’s failure, and calling it justice. The “independent monitor” he is asking for is the tell. That is not how change happens. That is a patronage job dressed as accountability. That said, underneath the political theater, the structural demands are real. And if a judge grants even a portion of them, they could change daily life for two billion people.

Think about what your daily experience on Instagram or Facebook or TikTok or YouTube actually is. You think you made some choices. Pat yourself on the back. You have been fooled into thinking that you were the one making the choices. Now, let’s get real.

You open the app and a feed appears. Like I did. This morning. I went to Instagram to see what my “pen friends” were doing. I didn’t see a damn thing. What I saw, I didn’t choose. Neither do you. You see what they want you to see.

An algorithm shows you what it wants to show you, trained on everything you have ever paused on, liked, or watched past the halfway point. It is the ultimate illusion, where perception is mistaken for reality. It has one job: keep you there. The most reliable way to do that, as the internal research showed and as the companies knew, is to surface content that provokes anxiety, outrage, desire, or envy. Those are the emotions that generate engagement. Engagement brings in the moolah. The loop is the product. I called this trap back in 2018. The feeds have only gotten more sophisticated since.

Layer in the design features built around that loop. Infinite scroll, so there is never a natural stopping point. Autoplay, so the next thing starts before you have decided you want it. Notifications calibrated to pull you back at the moment your attention drifts elsewhere. Variable reward, the unpredictable appearance of a like, a comment, a new follower, built like a slot machine. None of this happened by accident. All of it was tested, measured, and optimized.

This is what the courts are now calling a design defect. And to understand why that matters, you need to understand the wall that has protected these companies for thirty years.

Section 230 of the Communications Decency Act, passed in 1996, is the founding legal pillar of the modern internet. It says platforms cannot be held liable for content posted by their users. It is why Facebook is not responsible for a defamatory post, why YouTube is not liable for a radicalization video, why the whole architecture of user-generated content was able to exist at scale. Every time someone tried to sue a social platform for harms caused by what users posted, Section 230 was the wall. Case dismissed.

The Los Angeles plaintiffs found a door in that wall. The jurors were specifically instructed not to consider the content Kaley saw on the platforms. Not because it was irrelevant to her harm, but because content is where Section 230 lives. As NPR reported, the lawyers pursued a case of defective design precisely to get around the high bar set by Section 230.

The entire case was built around the design. Infinite scroll, autoplay, algorithmic feeds, notification systems. Those are not user posts. Those are the company’s own engineering decisions, made in their labs, tested on their servers, optimized by their employees. CBS News put it plainly: this case centered around how the apps are designed, not the content itself. Section 230 does not protect you from your own product choices.

The jury answered yes to seven specific questions per defendant. Were they negligent in the design or operation of their platforms? Was that negligence a substantial factor in causing harm? Did they fail to adequately warn users? And did they act with malice, oppression, or fraud? That last finding triggered punitive damages.

The pipe (aka the social media platform), not what flows through it. That argument won, and it opened new legal ground that could change everything that comes next.

If the design is the defect, the remedy is redesign. Not a warning label. Not a terms of service update. The product itself has to change.

Not surprisingly, I have some suggestions. Actually pretty simple ones that even politicians can grok.

Start with defaults. Right now every addictive feature ships on by default. Flip that. Ship the product in its least addictive form. Chronological feed. No autoplay. Scroll that ends. Notifications off until you choose otherwise. Every addictive feature requires an explicit, informed choice to activate.

That is consent architecture. And it is exactly what these courts have been asking. Did you warn users? Did you get meaningful consent? The answer, established now by two juries, is no.
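For the engineers in the audience, here is roughly what "off by default, on by explicit choice" could look like. This is a minimal sketch, not anything Meta or YouTube actually ships. The names `FeedSettings`, `OPT_IN_FEATURES`, `opt_in`, and `opt_out` are hypothetical, and the `disclosure_acknowledged` flag is my stand-in for whatever "informed" would mean in practice.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical list of the engagement features named above.
OPT_IN_FEATURES = ("algorithmic_feed", "autoplay", "infinite_scroll", "notifications")


@dataclass
class FeedSettings:
    """Hypothetical per-user settings: every engagement feature starts off."""
    algorithmic_feed: bool = False  # False means a chronological feed
    autoplay: bool = False
    infinite_scroll: bool = False   # False means the scroll ends
    notifications: bool = False
    # Record of explicit opt-ins: feature name -> UTC time of consent
    consent_log: dict = field(default_factory=dict)


def opt_in(settings: FeedSettings, feature: str, disclosure_acknowledged: bool) -> None:
    """Turn a feature on only after the user has acknowledged a disclosure."""
    if feature not in OPT_IN_FEATURES:
        raise ValueError(f"unknown feature: {feature}")
    if not disclosure_acknowledged:
        raise PermissionError(f"{feature} requires an informed, explicit choice")
    setattr(settings, feature, True)
    settings.consent_log[feature] = datetime.now(timezone.utc)


def opt_out(settings: FeedSettings, feature: str) -> None:
    """Turning a feature off never needs a disclosure step."""
    if feature not in OPT_IN_FEATURES:
        raise ValueError(f"unknown feature: {feature}")
    setattr(settings, feature, False)
    settings.consent_log.pop(feature, None)


if __name__ == "__main__":
    settings = FeedSettings()  # least addictive form out of the box
    opt_in(settings, "autoplay", disclosure_acknowledged=True)
    print(settings.autoplay, settings.notifications)
```

The asymmetry is the point of the sketch: switching a feature on takes a deliberate, recorded step, while switching it off takes none.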

Real age verification is the other demand with teeth. But it is also a hairball that will unwind a lot of the internet, security and privacy. That is for experts with more gravitas and knowledge than me.

Instagram’s Adam Mosseri knows what is coming. His year-end memo was full of language about authenticity, credibility signals, and platform responsibility. The same language is now showing up in court filings. At trial, he testified that there is always a tradeoff between “safety and speech.” That is a telling formulation. Safety as a constraint on the product, not a foundation of it. When I pushed on what Instagram is really positioning itself to become, he pushed back. But the architecture he described, who posts rather than what is posted, is exactly the consent architecture these courts are now demanding.

The practical problem for the platforms is simple. You cannot run two different algorithmic systems in one product without everyone noticing. Once you build the less addictive version for minors, the question becomes impossible to avoid. Why is that version not the default for everyone? Why is the more addictive version what adults get, when they never consented to it either?

The legal fight is about children. The logic does not stop at eighteen.

The tobacco parallel is everywhere in the coverage this week. It is the right one, but people are using it loosely. The important part of the tobacco story was not the $206 billion settlement. What changed behavior was what came alongside the money: mandatory disclosure of internal research, advertising restrictions, forced redesign. Joe Camel did not die because of a fine. Joe Camel died because a court said you cannot market this product to children, and you cannot keep making it more addictive than it already is.

The companies held out for decades. Denied the science. Attacked the researchers. Then they did not.

But here is where the parallel gets uncomfortable. Tobacco did not go away. It became Juul. I wrote about Juul back in 2018, when Silicon Valley was celebrating a $15 billion e-cigarette company as a tech startup. I called it Camel 2.0 then. Same addiction, new delivery mechanism, new investors, new PR. As a former smoker who nearly lost his life to cigarettes, I was livid. Tobacco companies figured out how to sell the same addiction in a cuter package, and Silicon Valley helped them do it.

Zuckerberg will do the same. He is too good at survival not to. The feeds may change form. The addiction machine will find a new name. Addiction is a lucrative business. It always finds a way.

Meta and YouTube have already announced appeals. They have the money and the lawyers to fight this for years. And they will. But discovery in each new case keeps producing documents. The internal research keeps surfacing. The bill keeps growing. Eventually it is cheaper to change than to fight.

Zuckerberg’s doctrine is simple: two steps forward, one step back, and make it appear as if he is changing. His superintelligence memo last July was the latest example. Talk about the future, avoid the present, and let the lawyers handle the rest.

That is the logic of the crowbar.

All eyes on Santa Fe on May 4. There just might be enough structural change. And if consent architecture is put in place, then we can all feel the glow, for a moment. Billions of people deserve a different default. Till the battle starts again. Like Juul.

March 25, 2026, San Francisco

PS: I want to apologize for sending two long emails in a single day. I had no intention of doing so. I was definitely not going to write about Meta or social media. I was thrilled to just nerd out about broadband today. However, I can’t help myself when it comes to Facebook and Zuckerberg and their shenanigans. I have been covering them since they were a tiny startup.

I completely agree with your pov.

I’ve experienced and seen a few of the things Snap, Instagram, and TikTok are doing to our kids as they smoke Juuls in school bathrooms, which administrators cannot and do not address. We had custody of our granddaughter for two years, and for two years I had to review her socials because of a court order.

Imagine a 14-year-old’s Snap homepage with a reference link to her OnlyFans website, another high schooler selling Adderall, Mary Jane, fentanyl and other illegal drugs on his TikTok, obscuring it via VPN, or the girl who threatened to kill another girl for stealing a vape.

Having turned this over to school and local authorities, I saw little done because of the platforms’ “privacy” rights for the children.

All this for monetization, for eyeballs, for sale – at the cost of our children, their lives, their future, and our nation’s future.