
Om Malik is a San Francisco-based writer, photographer and investor.

It was a long time ago when I started my love affair with optical networks and broadband. It started with covering long-forgotten companies such as UUNet Technologies and PSINet. It involved long-forgotten names such as Northern Telecom, long before it became Nortel(s nt). So perhaps you can’t fault me for feeling excited about reading the news that Alcatel-Lucent(s alu) Bell Labs identified an optical networking breakthrough that would theoretically allow sending data at 400 gigabits per second over a distance of 7,900 miles.

While the 400G figure itself didn’t excite me as much, the distance over which they could send the data impressed me, because it has implications that go beyond the oohs and aahs of raw speed. What’s more, if this breakthrough can be commercialized, say in five to 10 years (considering companies like JDS Uniphase(s jdsu) would have to make the components), it could not only improve our long-haul networks, giving them more oomph and making them more efficient, but also have a profound impact on our cloud future.

We certainly have come a long way

I remember the excitement back in 1995 around Ciena’s(s cien) DWDM system. That same year, Nortel, the Canadian giant that at the time ruled the optical landscape, came out with a 10 Gbps offering. And then the bubble burst. Things slowed a little and we kept waiting for the long-promised 40 Gbps technologies. Those 40 Gbps speeds finally came to market in 2006, and by 2008 they were gaining traction. In 2009, Ciena brought 100 Gbps gear to market.

To recap, here is the timeline of progress with optical networking technologies

400G and beyond

Since then, attention has focused on 400G systems. A lot of that has to do with marketing, but as my friend Andrew Schmitt, research analyst at Infonetics, points out: any time we get a 4x improvement in networking gear, things get interesting.

So it’s no surprise that this is where all the attention is focused. In 2012 we saw a new software-programmable approach to networking that upped the speeds to 400 Gbps. British Telecom(s bt) and Ciena recently showed off an experimental 800 Gbps network. Alcatel-Lucent and Telefonica have been working on their own high-capacity network experiments as well.

The latest development at Alcatel-Lucent Bell Labs is yet another example of continuous progress in optical fiber technologies, especially over long distances. This progress is one of the reasons we have seen an explosion of bandwidth on long-haul networks. According to TeleGeography, a market research firm, the world added 54 Tbps of capacity last year alone.

The irony of optics

But after my initial excitement had worn off, I was left asking myself: How much should we care about these breakthroughs? It’s not as if we are bandwidth-limited on these backhaul networks. We are doing really well in terms of transmission rates and have steadily boosted our ability to send signals over long distances. Sure, most optical networks in operation run at either 10 Gbps or 40 Gbps, but we are only a couple of years from getting 100 Gbps everywhere. What is most amazing is that this 40x improvement in optical networks has resulted in sharp declines in bandwidth prices on the networks that connect data centers, office buildings, cities and countries.

A good proxy for the long-haul and metro networking business is Cogent Communications(s ccoi), which operates one of the biggest networks on the Internet. The company claims that about 18 percent of Internet traffic runs over Cogent’s pipes, and that it has 28 percent of the market by bits and 12 percent of the market by dollars.

At a Merrill Lynch conference, Cogent Communications CEO David Schaeffer pointed out that the "average price per megabit in the market has fallen at a rate of about 40% per year for a dozen years," even as the number of players has gone from 200 to 12. The price on Cogent’s own network "during [that] period has fallen from $10 a megabit to the most recent quarter at $3.05 a megabit."
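Schaeffer’s two numbers describe different things: a market-wide decline of about 40% a year, and Cogent’s own price going from $10 to $3.05 a megabit. A minimal sketch of the compounding arithmetic, assuming a constant yearly rate of decline. The helper name `implied_annual_decline` is hypothetical, and so are the period lengths in the loop, since the quote doesn’t say exactly how long Cogent’s drop took.

```python
def implied_annual_decline(start_price: float, end_price: float, years: float) -> float:
    """Constant yearly rate of decline that takes start_price to end_price in `years` years."""
    if start_price <= 0 or end_price <= 0 or years <= 0:
        raise ValueError("prices and period must be positive")
    # (1 - r) ** years == end / start  =>  r = 1 - (end / start) ** (1 / years)
    return 1.0 - (end_price / start_price) ** (1.0 / years)


# Cogent's quoted endpoints, in dollars per megabit.
START, END = 10.00, 3.05

# Assumed periods: the quote does not pin down how long Cogent's decline took.
for years in (3, 6, 12):
    rate = implied_annual_decline(START, END, years)
    print(f"over {years:>2} years: about {rate:.1%} cheaper each year")
```

If Cogent’s $10 to $3.05 drop spans the full dozen years, it works out to a bit under 10% a year, much gentler than the 40% market-wide figure. The two numbers only line up if Cogent’s decline happened over a little more than two years.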

He is not alone. Here is a little chart from 2012 about IP Transit prices that tells the story of falling prices.

Schaeffer estimates that the core Internet transit business carries about 400 petabytes of traffic a day, and that the bandwidth "purchased at the core of the internet out of the 650 data centers is a $1.5 billion addressable market and it’s been flat at that level for a dozen years."
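Two quick conversions help put those figures in perspective. The sketch below turns petabytes per day into an average bit rate, assuming decimal petabytes (10^15 bytes) and traffic spread evenly over 24 hours (real traffic has peaks). It also asks how fast traffic must grow for a market to stay flat in dollars while prices fall, assuming the 40% market-wide decline applies to this core transit market. The function names are hypothetical.

```python
SECONDS_PER_DAY = 86_400


def petabytes_per_day_to_tbps(pb_per_day: float) -> float:
    """Average rate in Tbps, assuming decimal petabytes (1 PB = 10**15 bytes)."""
    bits_per_day = pb_per_day * 10**15 * 8
    return bits_per_day / SECONDS_PER_DAY / 10**12


def volume_growth_for_flat_revenue(annual_price_decline: float) -> float:
    """Yearly traffic multiplier that keeps revenue (price x volume) constant."""
    return 1.0 / (1.0 - annual_price_decline)


avg_tbps = petabytes_per_day_to_tbps(400)
growth = volume_growth_for_flat_revenue(0.40)  # assumed: the 40%/yr market-wide decline
print(f"400 PB/day averages out to about {avg_tbps:.0f} Tbps")
print(f"flat revenue at -40%/yr needs traffic to grow {growth:.2f}x per year")
print(f"compounded over a dozen years: about {growth ** 12:.0f}x")
```

Under these assumptions, a $1.5 billion market that stays flat for a dozen years while prices fall 40% a year implies traffic growing roughly two-thirds every year.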

I am guessing David won’t be struggling for business in the coming years. Our growing connectedness is going to create much stronger demand for a much beefier cloud-based infrastructure. And as everything in the world is digitized through sensors and embedded compute devices, we are going to see an explosion in ambient data traveling between machines. The machines that sit in data centers will need big fat pipes.

We are in the early innings here, but distributed processing and storage over these big fat pipes should be an exciting prospect for companies that own big fiber pipes. Infonetics’ Schmitt argued that as optical bandwidth at the non-consumer level becomes even more plentiful and prices keep tumbling, we can start to learn to waste it. Instead of networks that use routers to shuttle data, we could start to build point-to-point connections, which are certainly more useful for high-level distributed computing.

The financial industry has already shown us the way — many hedge funds use special low-latency networks to process data and stay competitive. I wouldn’t be surprised if that becomes standard practice with all major businesses.
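Part of the appeal of point-to-point links is latency: every router in the path adds forwarding and queuing delay on top of the time light spends in the glass. A minimal sketch of that trade-off follows. The route length, hop count and per-hop delay are hypothetical illustration values, and the fiber refractive index of about 1.47 is an approximation.

```python
from dataclasses import dataclass

C_KM_PER_MS = 299_792.458 / 1000  # speed of light in vacuum, km per millisecond
FIBER_INDEX = 1.47  # approximate refractive index of silica fiber (assumption)
FIBER_KM_PER_MS = C_KM_PER_MS / FIBER_INDEX


@dataclass
class Path:
    name: str
    fiber_km: float
    router_hops: int
    per_hop_ms: float  # hypothetical queuing + forwarding delay per router

    def one_way_latency_ms(self) -> float:
        propagation_ms = self.fiber_km / FIBER_KM_PER_MS
        return propagation_ms + self.router_hops * self.per_hop_ms


# Hypothetical 1,000 km route: once through eight routers, once as a direct circuit.
routed = Path("routed", fiber_km=1_000, router_hops=8, per_hop_ms=0.5)
direct = Path("point-to-point", fiber_km=1_000, router_hops=0, per_hop_ms=0.0)

for path in (routed, direct):
    print(f"{path.name}: {path.one_way_latency_ms():.2f} ms one way")
```

Propagation delay is fixed by distance, so once the fiber is laid, the only lever left is removing hops. Under these made-up numbers, the routers nearly double the one-way latency.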

Last mile conundrum

Maybe I am being childlike in my thinking, but when I look at the long-haul networks, I see technology and the free market trying to figure things out and, in the end, bringing bandwidth online at an unprecedented scale. And sure, we are all benefiting from the dumb, crooked and completely crazy behavior during the boom that led to the overbuilding of fiber networks, but it has been more than a decade and the dark fiber is being put to good use. (Just ask Google!)

In comparison to the long-haul and intra-city networks, the world of last-mile connections has moved at a somewhat glacial speed. Here in the U.S., speeds have improved, from a 1 Mbps connection at the turn of the century to an average of about 25 Mbps from cable and phone companies, but the gains are not as astounding. A lot of that is due to the lack of competition in our access networks, which are controlled primarily by oligopolies such as AT&T(s t), Verizon(s vz)(s vod) and Comcast(s cmcsa).

Across the world, things are different. The Chinese are starting afresh. The Japanese and the Koreans went for fiber early, and Europeans have the advantage of short copper loops, which allow them to milk the copper and make 100 Mbps connections a reality. The European competitive landscape is such that fiber to the home is becoming less of a rarity.

But let’s face it: when it comes to the U.S., the last mile is less about technology and more about politics and the lack of competition. Wherever there is competition, in places such as Chattanooga (TN), Kansas City (KS), Austin (TX) and Vermont, things start to change. Speeds go up, service improves and incumbents hustle for business. Unfortunately, those pockets of competition are far too rare.

In the U.S., we have never had real competition. The Telecommunications Act of 1996 was a mirage and faulty from the outset; it never gave anyone a real chance to compete and disrupt. Thankfully, it is a distant memory, and a reminder of how Washington really works (by not working). And that’s our broadband future: held hostage by a political and regulatory system that is in bed with those it is supposed to regulate.

That sense of disillusionment, however, isn’t going to stop me from getting excited about new optical breakthroughs. Who knows…!

P.S.: My colleague Stacey Higginbotham wrote about the need for different thinking from ISPs. Hope you get a chance to read it.

The solution is open/equal access at layers 1 and 2. It worked in long distance (LD), data and cellular in the ’80s and ’90s, and it got us to where we are. 802.11, an unintended form of equal access, got us the rest of the way. Still, bandwidth remains 20-150x overpriced, depending on where you are in the stack, because of remonopolization 10 years ago. The failure of the Telecom Act and government policy has been not applying equal access evenly to all incumbents AND newcomers that receive rights-of-way (RoW) or frequency.

There’s actually no compelling case for gigabit to the home anytime soon, and the math of aggregation suggests that middle mile and core links can’t support it right now in any case. Most long-haul interconnects are still in the 10 – 40 gig range right now, so how many gigabit homes can they really handle?
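How many gigabit homes a 10 to 40 Gbps link can "handle" depends almost entirely on the oversubscription ratio, which the comment doesn’t state: homes rarely all run at full speed at once, so carriers sell more peak capacity than the link holds. A minimal sketch, where the ratios are illustrative assumptions rather than measured values and `homes_per_link` is a hypothetical helper.

```python
def homes_per_link(link_gbps: float, home_gbps: float, oversubscription: float) -> int:
    """Homes a shared link can serve when each home's peak rate is sold at
    `oversubscription`:1 against the link's capacity."""
    if home_gbps <= 0 or oversubscription <= 0:
        raise ValueError("rates and ratio must be positive")
    return int(link_gbps * oversubscription // home_gbps)


# Illustrative ratios only; real networks choose them from measured traffic.
for ratio in (1, 20, 50):
    print(f"{ratio:>2}:1 -> 10G link: {homes_per_link(10, 1, ratio):>4} homes, "
          f"40G link: {homes_per_link(40, 1, ratio):>4} homes")
```

At 1:1 (every home at full speed simultaneously) a 40G link carries just 40 gigabit homes; at 50:1 it carries 2,000. The disagreement in this thread is really about which ratio is realistic.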

The cable companies’ coax wires can scale up to 10 Gbps, and they’re already pumping 3 – 4 Gbps counting all the DTV programming they carry. The ultimate mix between TV and broadband is up to the consumer, as the cable company is in the business of making money and will respond to actual consumer demand. Blog posts, not so much.

The 19 million miles of fiber the U.S. is installing every year goes primarily into middle-mile and other commercial aggregation points, which is to say it goes where the demand is. We laid all this out in http://www.itif.org/publications/whole-picture-where-americas-broadband-networks-really-stand, and most of the serious policy makers in DC agree with us.

Actually, the compelling case is that most folks will switch to gigabit service in their homes if it is priced at a fair markup over the cost of providing it. Everyone knows this. No more 95% profit margin nonsense. If Google can break even providing gigabit fiber to the home for $70/mo, including the cost of building the new network, then AT&T is doing something quite wrong when it charges $51/mo for 12 Mbps, which is nearly two orders of magnitude slower, over an infrastructure paid for by the taxpayers decades ago. At that price per Mbps ($4.25), AT&T would charge $4,250/mo for a Gbps connection. That’s going to start dawning on millions of Internet customers really soon now. The cartel’s illusion of expensive and scarce bandwidth is about to collapse forever.
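The price-per-megabit comparison can be checked in a few lines. This sketch uses only the prices quoted in the comment and assumes 1 Gbps = 1,000 Mbps and strictly linear pricing, which is the comment’s own framing rather than how any ISP actually prices tiers. `price_per_mbps` is a hypothetical helper.

```python
def price_per_mbps(monthly_price: float, mbps: float) -> float:
    """Monthly dollars per Mbps of advertised speed."""
    return monthly_price / mbps


att = price_per_mbps(51.0, 12)  # $51/mo for 12 Mbps, as quoted in the comment
google = price_per_mbps(70.0, 1000)  # $70/mo for 1 Gbps, assuming 1 Gbps = 1,000 Mbps

print(f"AT&T: ${att:.2f}/Mbps, Google Fiber: ${google:.2f}/Mbps")
print(f"AT&T's rate scaled linearly to 1 Gbps: ${att * 1000:,.2f}/mo")
print(f"speed ratio: {1000 / 12:.0f}x")
```

The speed gap is about 83x, a bit short of two full orders of magnitude, while the per-megabit price gap is about 60x.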

And the thing about interconnects is, they get laid in bundles, and those bundles are tied together into virtual circuits of capacities equal to the aggregate capacity. There are many alternate routes for bottle-necked traffic to follow along other bundles. The max capacity of any one fiber is NOT the max speed of the Internet, and YOU KNOW THAT.
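The point about bundles and alternate routes is a max-flow question: the capacity between two points is set by all the parallel paths together, not by the single biggest fiber. A minimal sketch using the Edmonds-Karp max-flow algorithm on a hypothetical four-node network (node names and capacities in Gbps are made up). Real carriers use their own traffic-engineering tools; this only illustrates the principle.

```python
from collections import deque


def max_flow(capacity: dict[str, dict[str, float]], source: str, sink: str) -> float:
    """Edmonds-Karp: repeatedly push flow along the shortest augmenting path."""
    if source == sink:
        raise ValueError("source and sink must differ")
    # Residual graph with reverse edges initialised to zero.
    residual: dict[str, dict[str, float]] = {source: {}, sink: {}}
    for u, edges in capacity.items():
        for v, cap in edges.items():
            residual.setdefault(u, {})
            residual.setdefault(v, {})
            residual[u][v] = residual[u].get(v, 0) + cap
            residual[v].setdefault(u, 0)

    total = 0.0
    while True:
        # Breadth-first search for the shortest path with spare capacity.
        parent: dict[str, str | None] = {source: None}
        queue = deque([source])
        while queue and sink not in parent:
            u = queue.popleft()
            for v, cap in residual[u].items():
                if cap > 0 and v not in parent:
                    parent[v] = u
                    queue.append(v)
        if sink not in parent:
            return total
        # Find the bottleneck on that path, then push that much flow along it.
        bottleneck = float("inf")
        v = sink
        while parent[v] is not None:
            u = parent[v]
            bottleneck = min(bottleneck, residual[u][v])
            v = u
        v = sink
        while parent[v] is not None:
            u = parent[v]
            residual[u][v] -= bottleneck
            residual[v][u] += bottleneck
            v = u
        total += bottleneck


# Hypothetical network: a direct A-B fiber plus two alternate routes via C and D.
NETWORK = {
    "A": {"B": 100, "C": 100, "D": 40},
    "C": {"B": 100},
    "D": {"B": 40},
}
print(max_flow(NETWORK, "A", "B"))
```

No single fiber here carries more than 100 Gbps, yet A can send 240 Gbps to B because the alternate routes add their capacity to the direct link.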

Anyway, no-one has to wait for the middle mile to bulk up before offering ubiquitous gigabit fiber to the home. You conflate an “absence of demand” with the industry’s concerted effort to keep demand down. They already get as much money as customers are willing to pay for any service. It’s more accurate to say that companies are in the business of making money as cheaply as possible. It’s more accurate to say that Internet providers have resisted a little modest spending on dramatic upgrades, despite the customer’s keen interest in it. They’re shameless, they don’t listen to customers or blogs, and they have no fears about regulation or competition due to the corruption of our system. They’ll keep milking the legacy infrastructure for top dollar, until they have no choice but to upgrade (or they make good on existing plans to get out of the wireline business).

So Richard, because we destroyed LD competition and remonopolized the mid-mile 11 years ago by “deregulating” it, you say we can’t invest at the edge because we would overwhelm the core? Now you have indeed gone too far! The logic is disingenuous at best and twisted at worst. If there were true competition in mid-mile (not the competition that partially exists for 20-30% of the higher-end commercial market) then retail bandwidth pricing would reflect marginal cost and be 90%+ below where it is today and still generate MORE revenue.

History tells us this pricing elasticity of demand, which occurs in 3 flavors (normal, private to public shift, and application), won’t happen with average, high-priced, vertically integrated carriers. It didn’t happen between 1913-1983 and it has only happened in part since 2002 because of “open access” wifi and the remnants of the 3 digitization waves of the 1980-90s. Time to throw out 100 years of false network doctrine.

“average price per megabit in the market has fallen at a rate of about 40% per year for a dozen years” even as the number of players has gone from 200 to 12. The prices on Cogent’s network during “period has fallen from $10 a megabit to the most recent quarter at $3.05 a megabit.”

There’s a huge math error here. If the prices dropped 40% a year, the price per Mbit would be $6 after the first year, $3.60 after the second, $2.16 after the third… A lot less than $3 in a dozen years.
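The comment’s year-by-year figures can be reproduced directly. This sketch assumes, as the comment does, that Cogent’s $10 starting price follows the 40% market-wide rate every year; the quote itself may be describing two different measures or periods. `yearly_prices` is a hypothetical helper.

```python
def yearly_prices(start: float, annual_decline: float, years: int) -> list[float]:
    """End-of-year prices under a constant annual percentage decline."""
    prices = []
    price = start
    for _ in range(years):
        price *= 1.0 - annual_decline  # each year keeps (1 - decline) of the previous price
        prices.append(price)
    return prices


# The comment's assumption: $10/Mbps declining 40% a year for 12 years.
for year, price in enumerate(yearly_prices(10.0, 0.40, 12), start=1):
    print(f"year {year:>2}: ${price:.4f} per megabit")
```

After a dozen years, the price under that assumption ends up around two cents a megabit, two orders of magnitude below $3.05.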

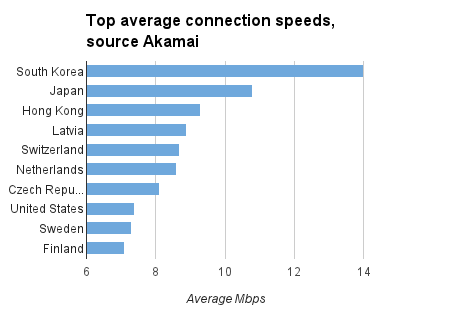

BTW, the slide from Akamai has a misleading legend. It’s not "Top Average Connection Speeds"; it’s average TCP connection speeds, where the capacity of the wire is divided first by the number of users sharing an IP address and then by the number of concurrent TCP connections each user has open. Akamai also measures an "Average Peak Connection Speed" that more accurately measures network capacity. For the US, the average peak is 30 Mbps and change.
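A toy version of the division described above shows why a per-connection average can sit far below the line rate. This is a deliberately simplified model of the commenter’s explanation, not Akamai’s actual methodology. It assumes the line is split evenly, and the line rate and counts are hypothetical.

```python
def per_connection_mbps(line_mbps: float, users_per_ip: int, connections_per_user: int) -> float:
    """Throughput one TCP connection sees if the line is split evenly (simplified model)."""
    if users_per_ip < 1 or connections_per_user < 1:
        raise ValueError("need at least one user and one connection")
    return line_mbps / (users_per_ip * connections_per_user)


LINE_MBPS = 24.0  # hypothetical line rate

print(per_connection_mbps(LINE_MBPS, 1, 1))  # one user, one connection
print(per_connection_mbps(LINE_MBPS, 2, 3))  # two users behind one IP, three connections each
```

The same 24 Mbps line reports 24 Mbps in the first case and 4 Mbps in the second, even though the wire hasn’t changed.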

Not to promote my work too much, I wrote a blog post on Akamai’s figures reflecting extensive discussions with them on what the figures mean: http://www.innovationfiles.org/u-s-broadband-speed-slightly-better-in-latest-akamai-report/

Carry on.

I would like to know where you got that "Top average connection speed" chart (source: Akamai). I have tried to find it, without success. I would also like to know when the data was collected and what methodology is behind it…

Richard, thanks for underscoring my point about open access at layers 1-2. Sharing goes away because there is always a tradeoff between layers 1, 2 and 3. The cost of components is constantly dropping (Moore’s law), while the network effect (Metcalfe’s law) is constantly generating new ecosystems of demand, and hence revenue growth to amortize continual investment in those layers. The carriers can’t keep up, so they price higher as they lose more demand in the upper and lower portions of the demand curve. Let’s see how much longer the vertically integrated service provider stack holds up against OTT, WebRTC, SON/hetnet and SDN/OpenFlow. And that’s before the impact of Google’s optical switch in the datacenter hits the public nets.

Open access at layers 1 and 2 depresses investment in faster networks. Google doesn’t offer it, despite early claims that it would.

There’s no middle mile monopoly in the US, we have a healthy independent fiber business that’s installing 20 million miles of fiber per year.

Om – Interesting observations on the continuing bandwidth growth in transmission networks and, as you note, JDSU will indeed enable this by not only developing and supplying high performance photonic components, but also through test and measurement solutions. But the real story is actually a little hidden. Yes, the demand for bandwidth and 400G speeds continues but the key challenge for service providers is the ability to apply the right bandwidth at the right time – i.e., be intelligent. Applications’ requirements differ: web browsing and file sharing may demand high bandwidth, but aren’t latency- or jitter-sensitive. Something like streaming video is extremely sensitive to the way bandwidth is delivered and it is precisely these services from which the operators need to capture revenue. It is actually relatively simple to throw raw bandwidth and capacity at the network with 100G and 400G speeds, but without continuous agility and intelligence to know which users are using which services – and when – more congestion and a declining customer experience will prevail. With the added capacity of 400G on the horizon, that will only grow more important.
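The difference between raw bandwidth and "the way bandwidth is delivered" shows up in jitter: two flows can move the same data in about the same time while one arrives at an uneven pace. A minimal sketch of the interarrival jitter estimator defined in RFC 3550 (section 6.4.1), J += (|D| - J) / 16. Here it is applied to hypothetical millisecond timestamps for five packets sent every 20 ms, rather than to RTP timestamp units.

```python
def interarrival_jitter(send_times: list[float], recv_times: list[float]) -> float:
    """Running jitter estimate in the style of RFC 3550, section 6.4.1."""
    if len(send_times) != len(recv_times):
        raise ValueError("need one receive time per sent packet")
    jitter = 0.0
    for i in range(1, len(send_times)):
        # D: change in transit time between consecutive packets.
        d = (recv_times[i] - recv_times[i - 1]) - (send_times[i] - send_times[i - 1])
        # Exponential smoothing with gain 1/16, as in the RFC.
        jitter += (abs(d) - jitter) / 16.0
    return jitter


# Hypothetical timestamps in ms: five packets sent every 20 ms.
SEND = [0, 20, 40, 60, 80]
STEADY = [5, 25, 45, 65, 85]  # constant 5 ms transit
BURSTY = [5, 30, 42, 70, 83]  # similar total time, uneven spacing

print(f"steady: {interarrival_jitter(SEND, STEADY):.2f} ms")
print(f"bursty: {interarrival_jitter(SEND, BURSTY):.2f} ms")
```

Both flows deliver the same five packets over roughly the same span, so their average throughput is about equal. Only the jitter figure reveals which one a streaming video player would struggle with.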